A few nights ago on The Daily Show, host Trevor Noah interviewed Mira Murati (CTO of OpenAI) about DALL$\cdot$E 2 and, more generally, the power of AI:

The whole interview is a great watch, but one thing that stood out to us is Trevor’s question at 3:50:

“So how do you safeguard them [generative models]?… We can very quickly find ourselves in a world where nothing is real, and everything that’s real isn’t, and we question it. How do you prevent, or can you even prevent that completely?”

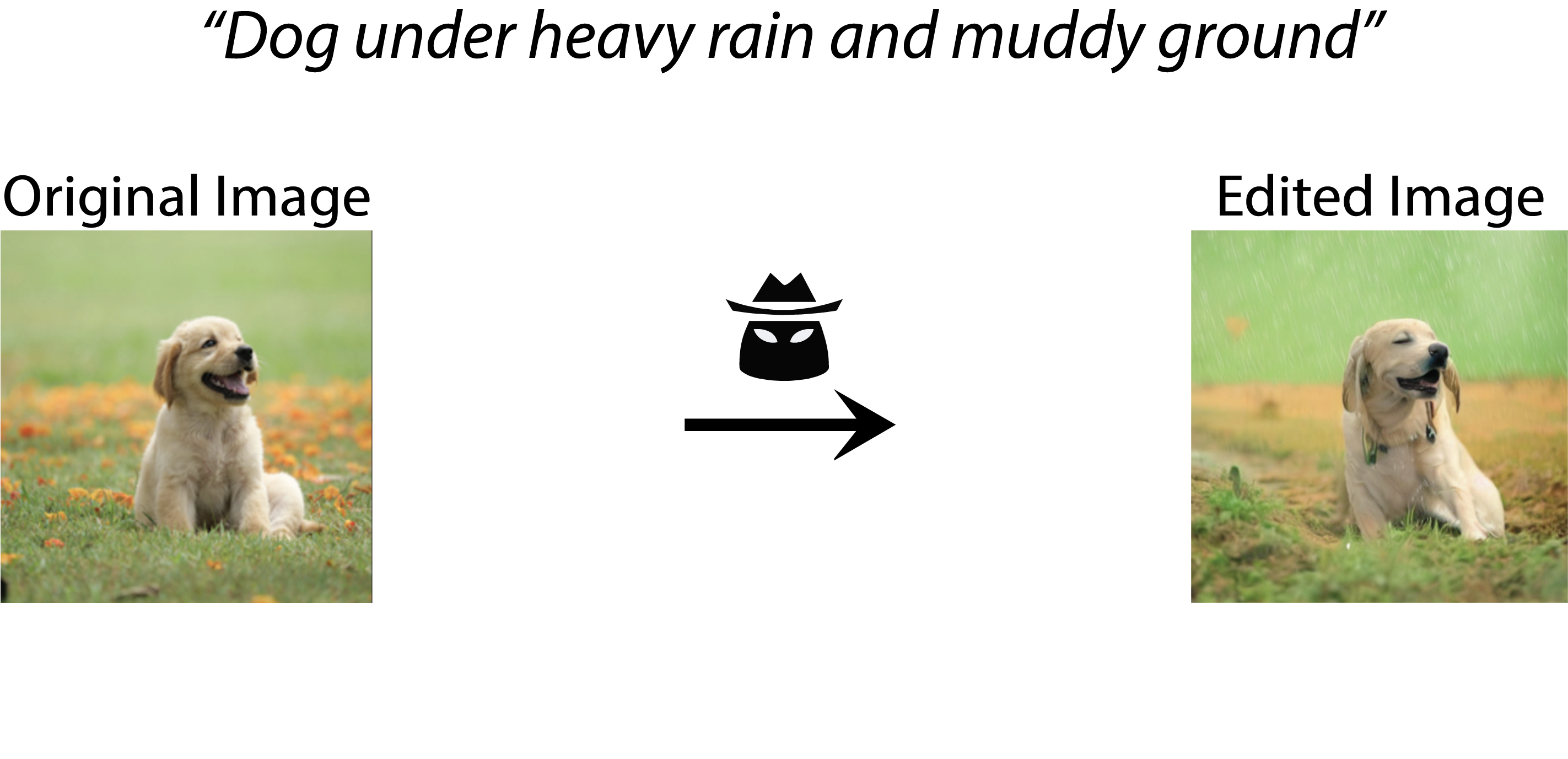

Indeed, DALL$\cdot$E (and diffusion models in general) greatly exacerbate the risks of malicious image manipulation. What previously required extensive knowledge of Photoshop can now be done with just a simple natural-language query:

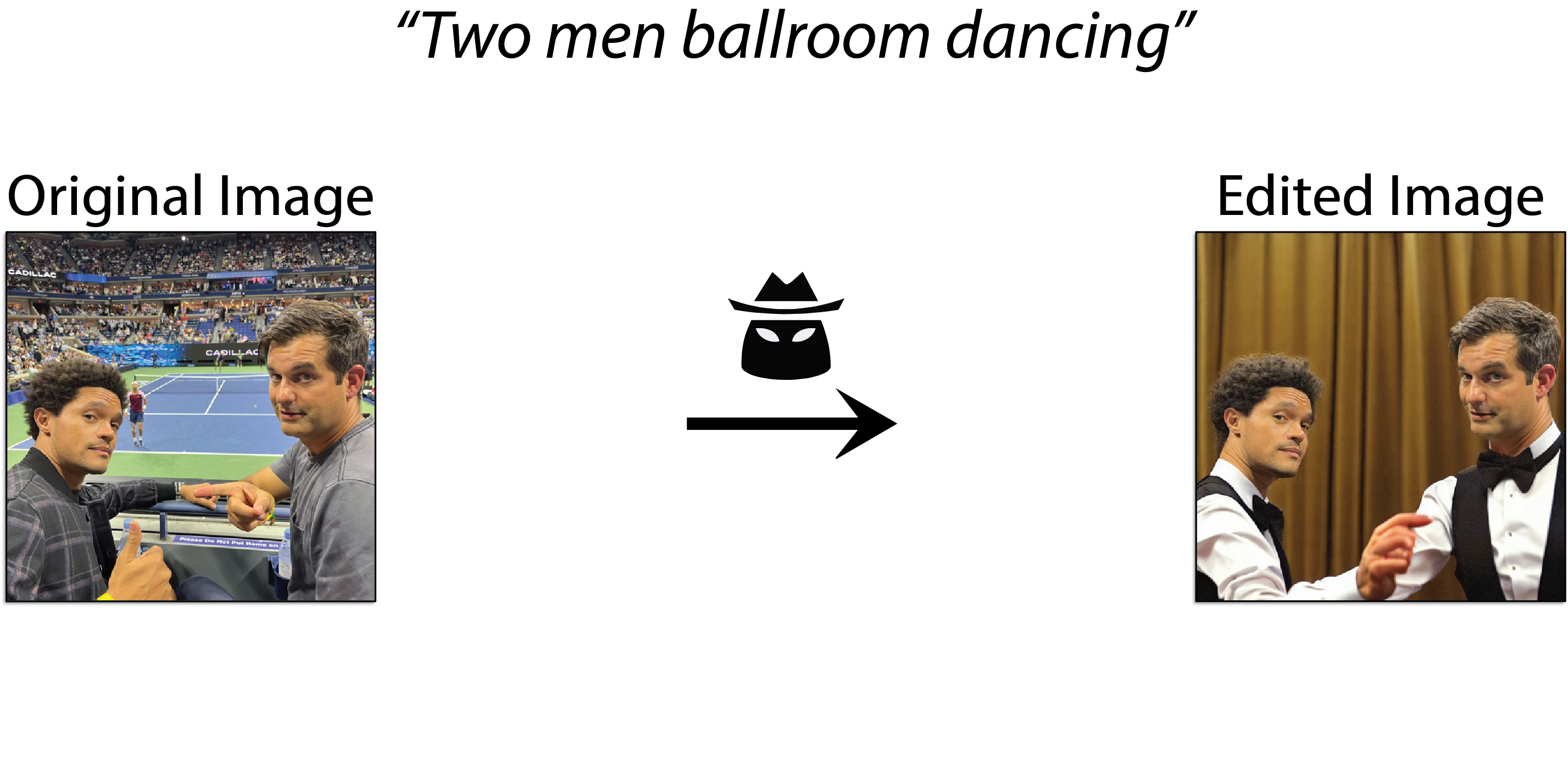

In fact, editing photos of cute dogs is just the tip of the iceberg:

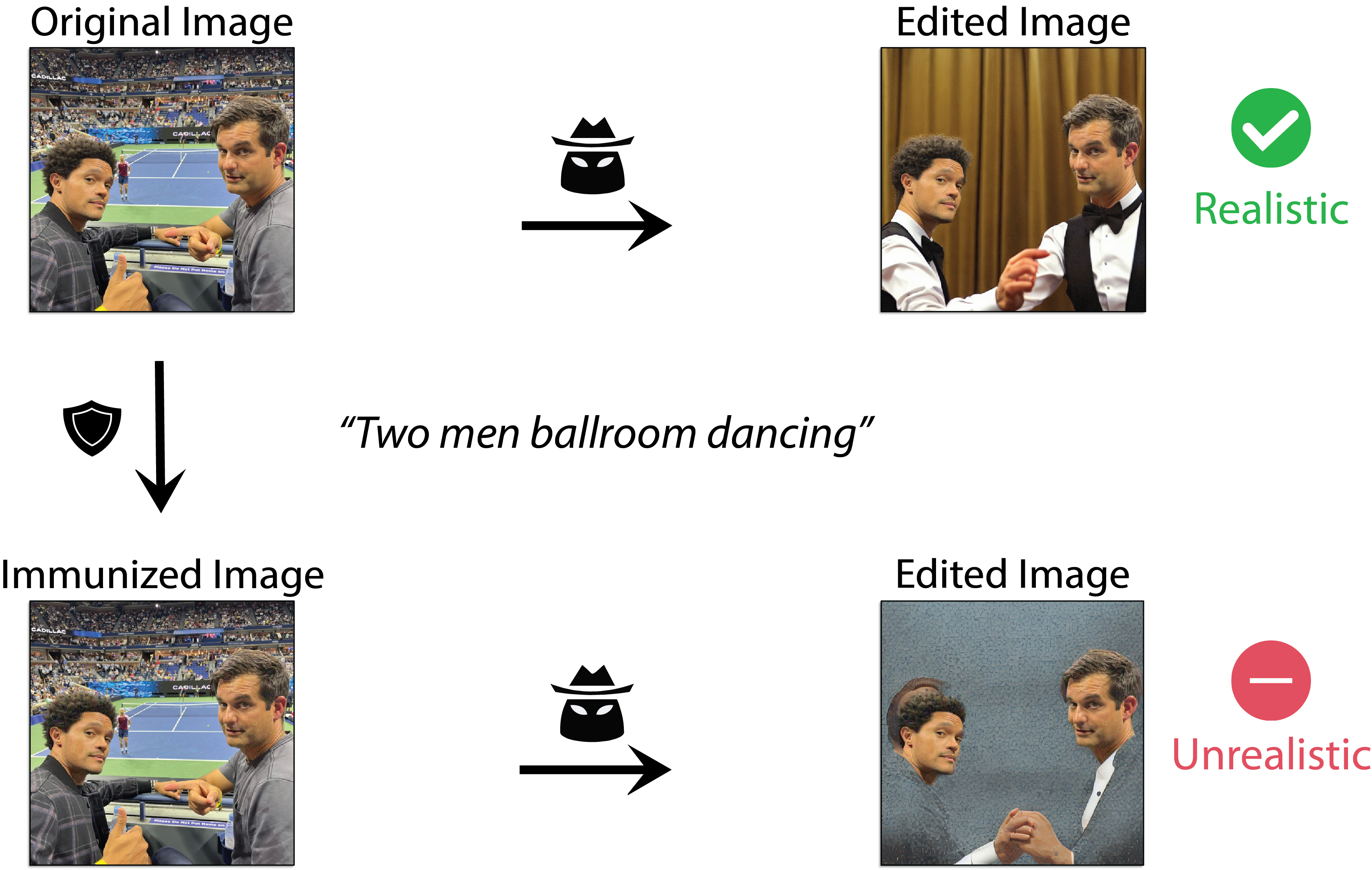

So, back to Trevor Noah’s question: is there any hope of protecting against this manipulation? We spent a few nights hacking away, and it turns out that, by leveraging adversarial examples, we can do exactly that! The details of our scheme are below, but the essence is that, by slightly (even imperceptibly) modifying the input image, we can create a PhotoGuard that makes the image immune to direct editing by generative models!

By the way, Michael Kosta is not the only person who has a selfie with Trevor. Hadi, the lead student on this project, took a selfie with Trevor a couple of years ago too.

Now, Hadi can leverage diffusion-powered photo editing to “deepen” his (imaginary) friendship with Trevor. If the selfie were guarded, none of this would be possible (sadly for Trevor, it isn’t)!

Some details

The core of our “immunization” process is to leverage so-called adversarial attacks on these generative models. In particular, we implemented two different PhotoGuards, focused on latent diffusion models (like Stable Diffusion). For simplicity, we can think of such models as having two parts:

- The conditioning mechanism is how the model incorporates external data such as the starting image and the prompt into its final generation. Typically, a pre-trained encoder converts the external signals to a shared embedding space—the model concatenates these embeddings and uses them as input to…

- …the diffusion process, which is responsible for generating the final image. There are many good introductions to how exactly diffusion processes work, but the summary is that we start from random noise, and then repeatedly apply a model that “denoises” the input a little bit at a time.

We construct both a simple PhotoGuard targeting the conditioning mechanism, and a complex PhotoGuard targeting the end-to-end diffusion process itself.

Simple PhotoGuard

In the simpler of the two, we adversarially attack only the conditioning step of the diffusion process. That is, given a starting image $x_0$, we find an image $x_{adv}$ satisfying:

\[x_{adv} = \arg\min_{\lVert x - x_0 \rVert \lt \delta} \mathcal{L}(z_x, z_{targ})\]

where $z_x$ is the embedding of the input $x$, and $z_{targ}$ is a fixed embedding. We set $z_{targ}$ to the all zeros vector (or even to an embedding of a random image), causing the diffusion model to completely ignore the starting image and focus only on the prompt.
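To make this optimization concrete, here is a minimal sketch of the simple guard using projected gradient descent (PGD). Everything in it is an assumption made for illustration: a random linear map `W` stands in for the real image encoder, the loss is a squared Euclidean distance, the constraint is an $\ell_\infty$ ball of radius `eps`, and the names `encode` and `simple_photoguard` are hypothetical. With a real latent diffusion model, one would instead backpropagate through the model's actual encoder using an autodiff library.

```python
import numpy as np

def encode(x, W):
    """Toy stand-in for the image encoder: a fixed linear map (an assumption)."""
    return W @ x

def simple_photoguard(x0, W, z_targ, eps=0.05, step=0.01, n_steps=100):
    """PGD on L(x) = ||encode(x) - z_targ||^2, keeping x within an
    L-infinity ball of radius eps around the original image x0."""
    x = x0.copy()
    for _ in range(n_steps):
        residual = encode(x, W) - z_targ
        grad = 2.0 * W.T @ residual          # analytic gradient of L w.r.t. x
        x = x - step * np.sign(grad)         # signed gradient descent step
        x = np.clip(x, x0 - eps, x0 + eps)   # project back onto the eps-ball
        x = np.clip(x, 0.0, 1.0)             # keep pixel values in [0, 1]
    return x

rng = np.random.default_rng(0)
W = rng.normal(size=(8, 64)) / 8.0           # hypothetical 64-pixel "image", 8-dim embedding
x0 = rng.uniform(0.2, 0.8, size=64)
z_targ = np.zeros(8)                         # all-zeros target embedding, as in the text

x_adv = simple_photoguard(x0, W, z_targ)
loss_before = float(np.sum((encode(x0, W) - z_targ) ** 2))
loss_after = float(np.sum((encode(x_adv, W) - z_targ) ** 2))
```

In this toy setting, `x_adv` stays within `eps` of `x0` in every pixel while its embedding moves closer to `z_targ`, which is the behavior the guard relies on.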

Complex PhotoGuard

However, we find that we can do an even stronger guard! Here, we modify the starting image with the goal of breaking the whole end-to-end diffusion process. Because the diffusion process is iterative and involves repeated application of a network, taking gradients through the diffusion process is memory-intensive. We found that differentiating through only four denoising steps was enough to throw off the entire diffusion process. With a little engineering, we were able to fit four steps onto a single (A100) GPU. As you can see, editing our immunized/defended photos leads to images that are much more clearly fake than with the previous guard.
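The memory cost of differentiating through the denoising steps can be seen in a small sketch. This is a toy under stated assumptions, not our actual implementation: the "denoiser" is a hypothetical update `x <- x - alpha * tanh(A @ x)`, the objective (pushing the output of a four-step rollout toward a fixed `target`) is one plausible choice that this post does not pin down, and the gradient is written out by hand rather than computed by an autodiff library. The point is that the backward pass needs an activation saved at every step, so memory grows with the number of steps.

```python
import numpy as np

def rollout(x, A, alpha, n_steps):
    """Apply the toy denoising update n_steps times, saving each
    step's activation because the backward pass needs it."""
    saved = []
    for _ in range(n_steps):
        h = np.tanh(A @ x)
        saved.append(h)                      # memory grows linearly with n_steps
        x = x - alpha * h
    return x, saved

def loss_and_grad(x, A, target, alpha=0.1, n_steps=4):
    """Return L = ||rollout(x) - target||^2 and its gradient w.r.t. x."""
    x_out, saved = rollout(x, A, alpha, n_steps)
    diff = x_out - target
    g = 2.0 * diff
    for h in reversed(saved):                # backpropagate one step at a time
        g = g - alpha * (A.T @ ((1.0 - h ** 2) * g))
    return float(diff @ diff), g

def complex_photoguard(x0, A, target, eps=0.05, step=0.01, n_iters=50):
    """PGD on the end-to-end loss, within an L-infinity ball around x0."""
    x = x0.copy()
    for _ in range(n_iters):
        _, g = loss_and_grad(x, A, target)
        x = np.clip(x - step * np.sign(g), x0 - eps, x0 + eps)
    return x

rng = np.random.default_rng(0)
A = rng.normal(size=(16, 16)) / 8.0          # hypothetical denoiser weights
x0 = rng.uniform(0.2, 0.8, size=16)
target = np.zeros(16)                        # hypothetical target output

x_adv = complex_photoguard(x0, A, target)
loss_before, _ = loss_and_grad(x0, A, target)
loss_after, _ = loss_and_grad(x_adv, A, target)
```

With a real diffusion model, each saved activation is a full set of network activations rather than one small vector, which is why only a handful of steps fit on a single GPU.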

Here are some examples of fake photos, with and without our “immunization!”

Takeaways and Future Work

So, using relatively simple techniques relating to adversarial examples (and about a week’s worth of hacking), we were able to protect images against manipulation from diffusion-based generative models. That said, this is just the beginning, and there are still many unanswered questions!

- We only constructed these examples by using an open-source diffusion model (from HuggingFace). Is it possible to make them with only black-box access to the model?

- Our complex PhotoGuard uses a lot of memory (we could only fit four diffusion steps onto a single GPU). Meanwhile, recent work shows that for some diffusion processes, one can obtain the gradient through the entire diffusion process by solving a stochastic differential equation (SDE) and using a constant amount of memory. Is it possible to do something similar more generally?

- There is a huge literature on constructing robust adversarial examples. It should be possible to leverage similar techniques to make our PhotoGuards more robust to manipulation.

More generally, we’re excited about the prospect of adversarial examples being used for forcing intended behavior, rather than for exploiting vulnerabilities (a phenomenon also seen in our work on unadversarial examples!).